Bacalhau Project Report - Feb 10, 2023

On-prem streaming demo, State of Open conference, and some IPFS challenges.

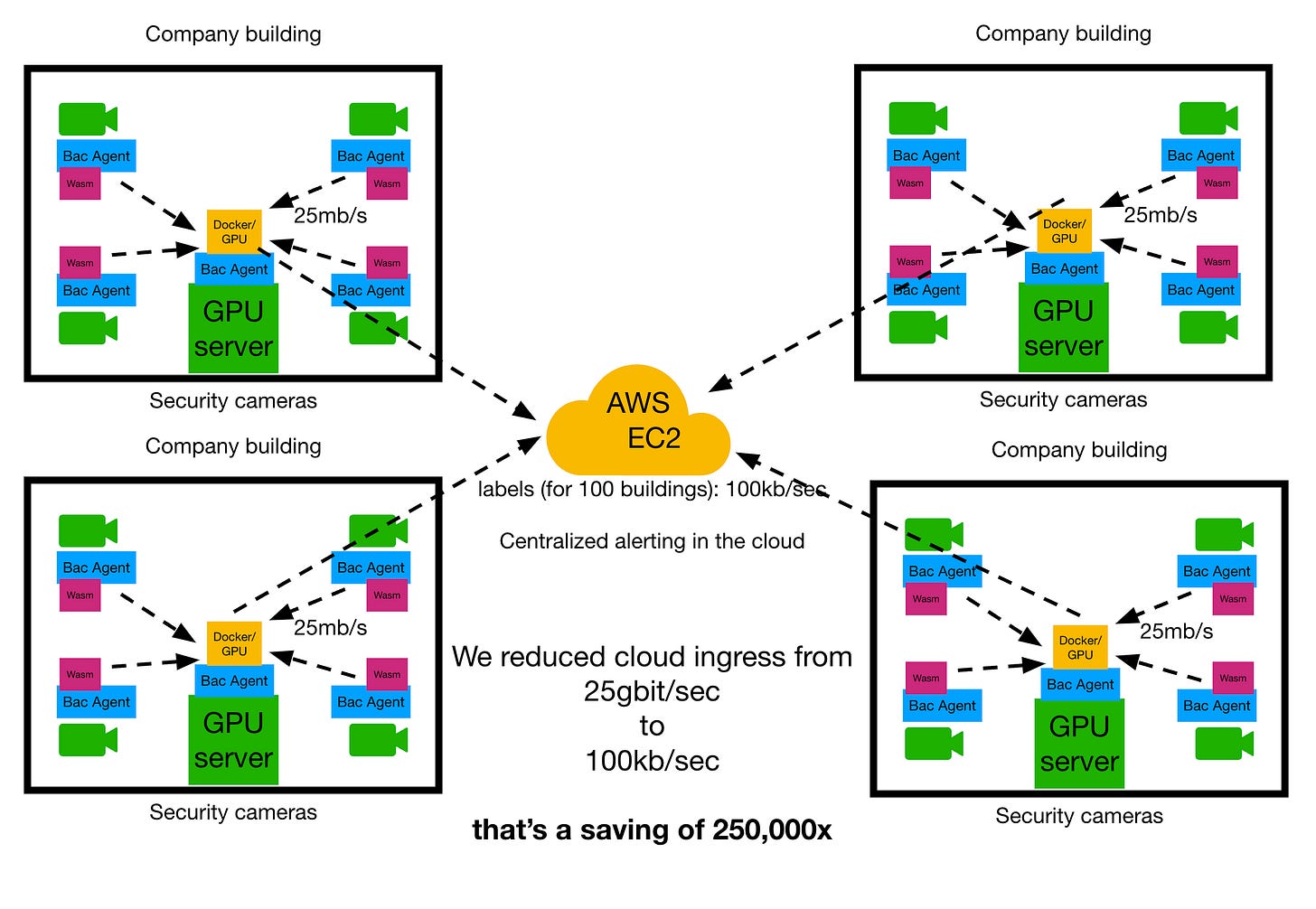

A lot of the team went to the amazing State of Open conference in London this week, and came away with loads of exciting ideas for developing Bacalhau for end users. We also landed a shiny new streaming demo which we’re pretty excited to share…

Bacalhau Streaming Demo 🏠🚀

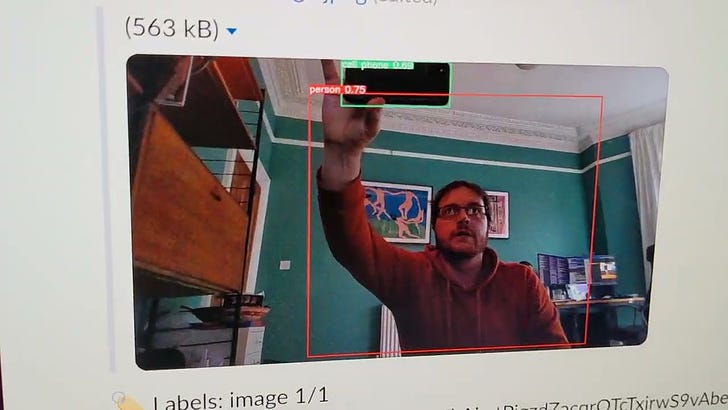

We’ve been working for a few weeks on a demo of a new streaming layer on top of the Bacalhau job scheduler. Here it is in action, being used to develop an example distributed data app: Wi-Fi-based intrusion detection!

What did we just see?

For starters, we have a PoC of the new Bacalhau Streaming layer, with support for ingesting from local data sources on the nodes, shown in the orange box above. Then there is the demo itself: code that reads images from the webcam and log lines from the wireless access point, and streams them to the inference server, which runs inference on the images whenever a new connection to the access point is detected. What’s the point of this?

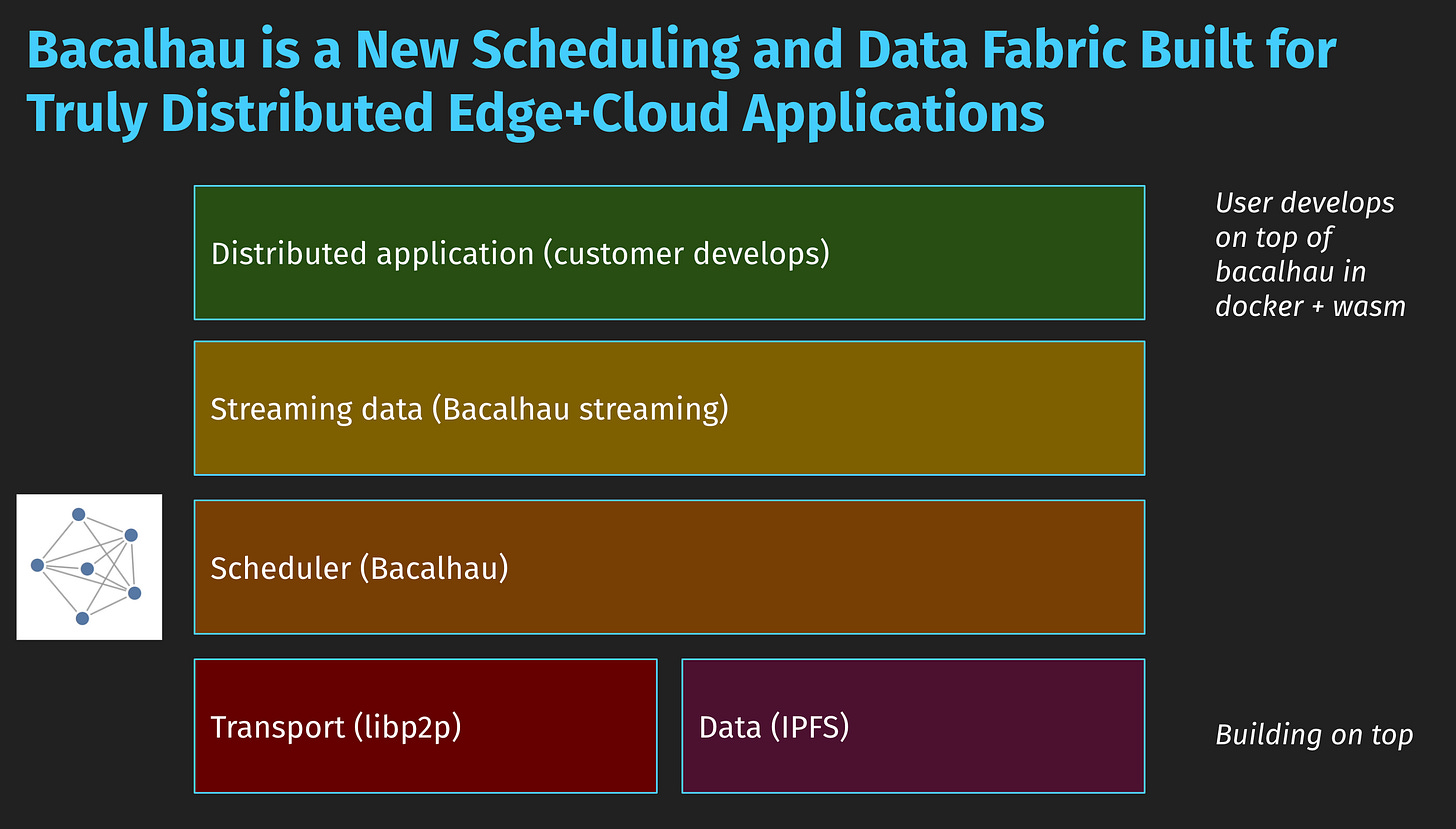

Architecture before

Before Bacalhau, the architecture of such a system might be to stream the feed from every one of 100 security cameras in your building up to the cloud, but that’s expensive…

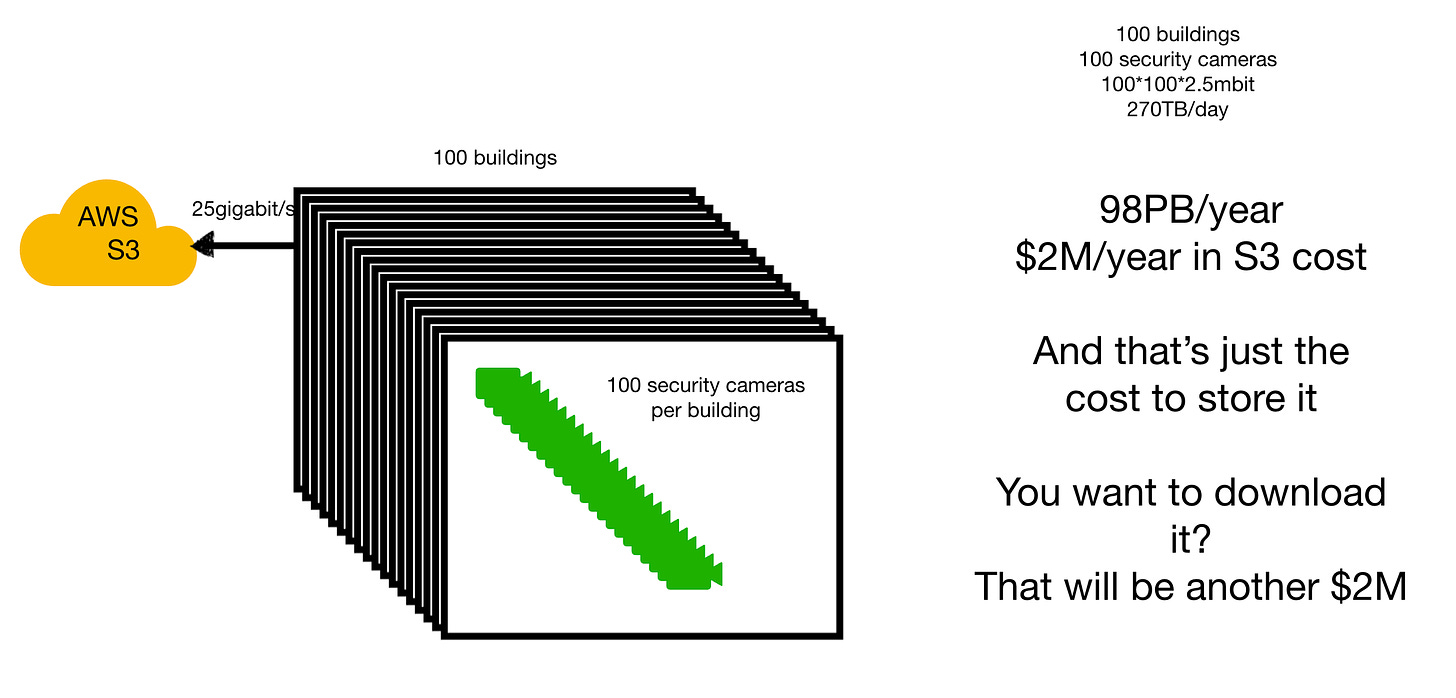

Architecture after

The architecture afterwards moves the code to where the data is: processing happens locally, on the cameras themselves and on GPU nodes local to each building, which saves a lot of ingress costs!

Specific details

Here we see how the three jobs are deployed to the three labelled Bacalhau nodes: two of them host the webcam and access point data sources, and the third is the powerful GPU node.

How does it work?

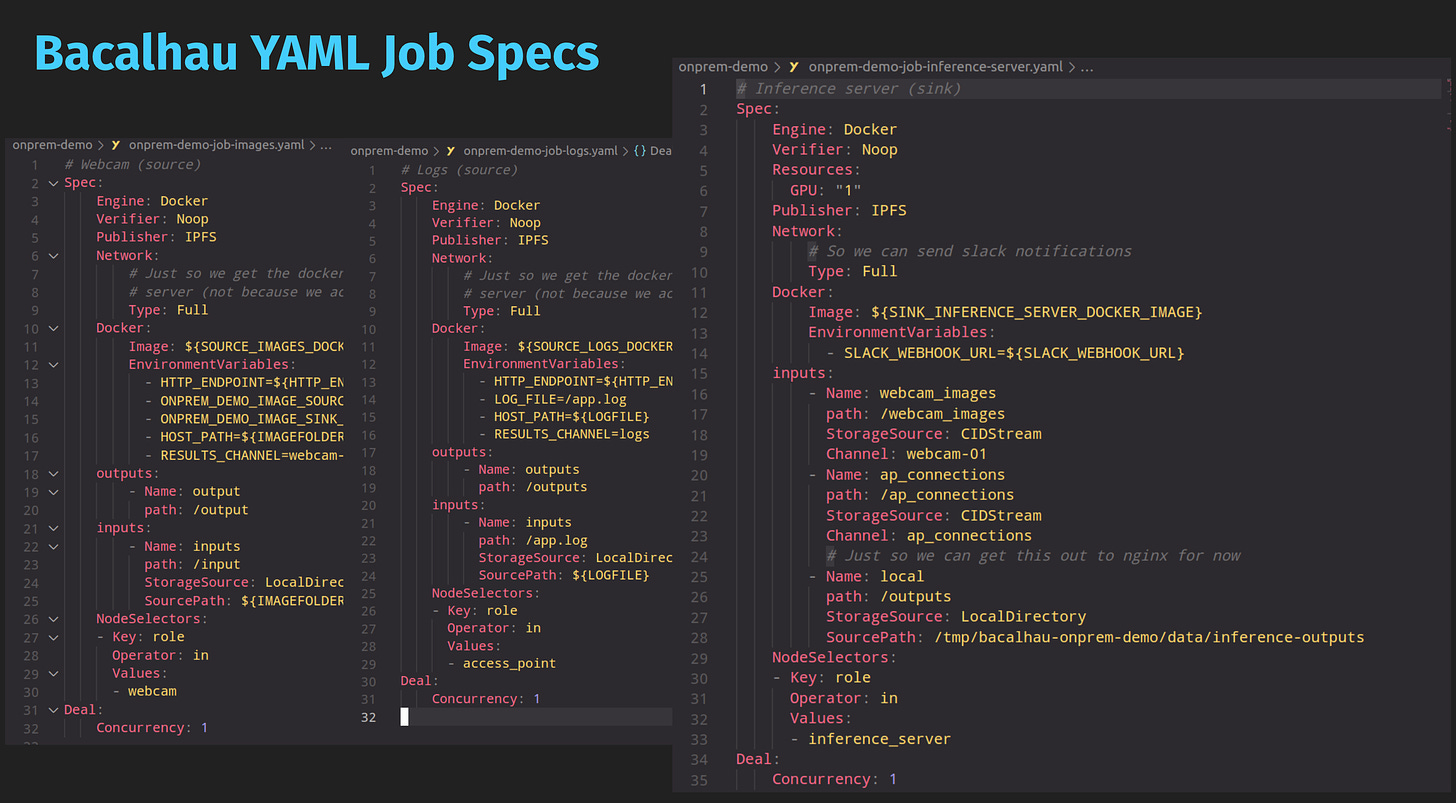

The Bacalhau job specs are shown below:

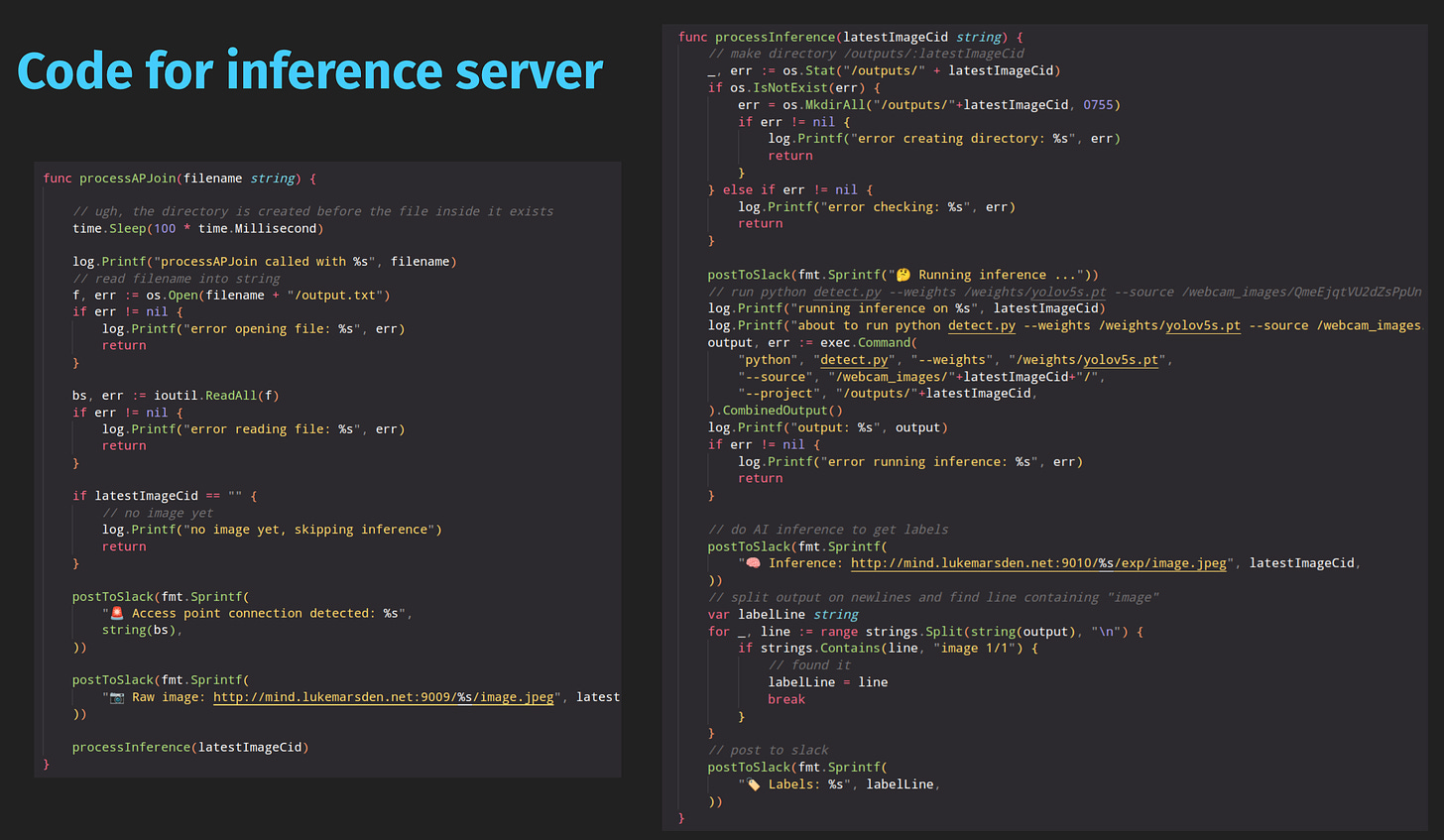

There’s a new LocalDirectory storage source, which lets you mount a local directory directly into a job. Long-running jobs on each of the source nodes read data as it changes in those local directories (reading new JPEGs from the webcam, and tailing the logfile of the access point) and ping the new Bacalhau streaming HTTP endpoint at `/submit` every time there’s a new event, capturing the data as a CID. The inference server job subscribes to those events through another new storage source, CIDStream: every time one of the first two jobs sends an event, it downloads the referenced CID and writes it into a new directory.
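To make the source-side behaviour concrete, here is a minimal Python sketch of "watch a local directory and emit one event per new file". It is an illustration only, not the demo's code: `poll_once`, `content_id` and the event fields are hypothetical names, the SHA-256 digest is just a stand-in for a real IPFS CID, and `submit` is a callable you pass in rather than a real client for the streaming endpoint.

```python
import hashlib
from pathlib import Path


def content_id(path: Path) -> str:
    """Stand-in for a CID: SHA-256 hex digest of the file bytes (NOT a real IPFS CID)."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def poll_once(directory: Path, seen: set, submit) -> int:
    """Call submit(event) once for every file not seen on an earlier poll.

    `seen` is mutated in place so repeated polls only report new files.
    Returns the number of events submitted during this poll.
    """
    new_events = 0
    for path in sorted(directory.iterdir()):
        if not path.is_file() or path.name in seen:
            continue
        seen.add(path.name)
        # Hypothetical event shape; the real payload format is not shown in this post.
        submit({"source": directory.name, "name": path.name, "id": content_id(path)})
        new_events += 1
    return new_events
```

A long-running job would call `poll_once` in a loop with a short sleep between polls, passing a `submit` function that posts the event wherever it needs to go.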

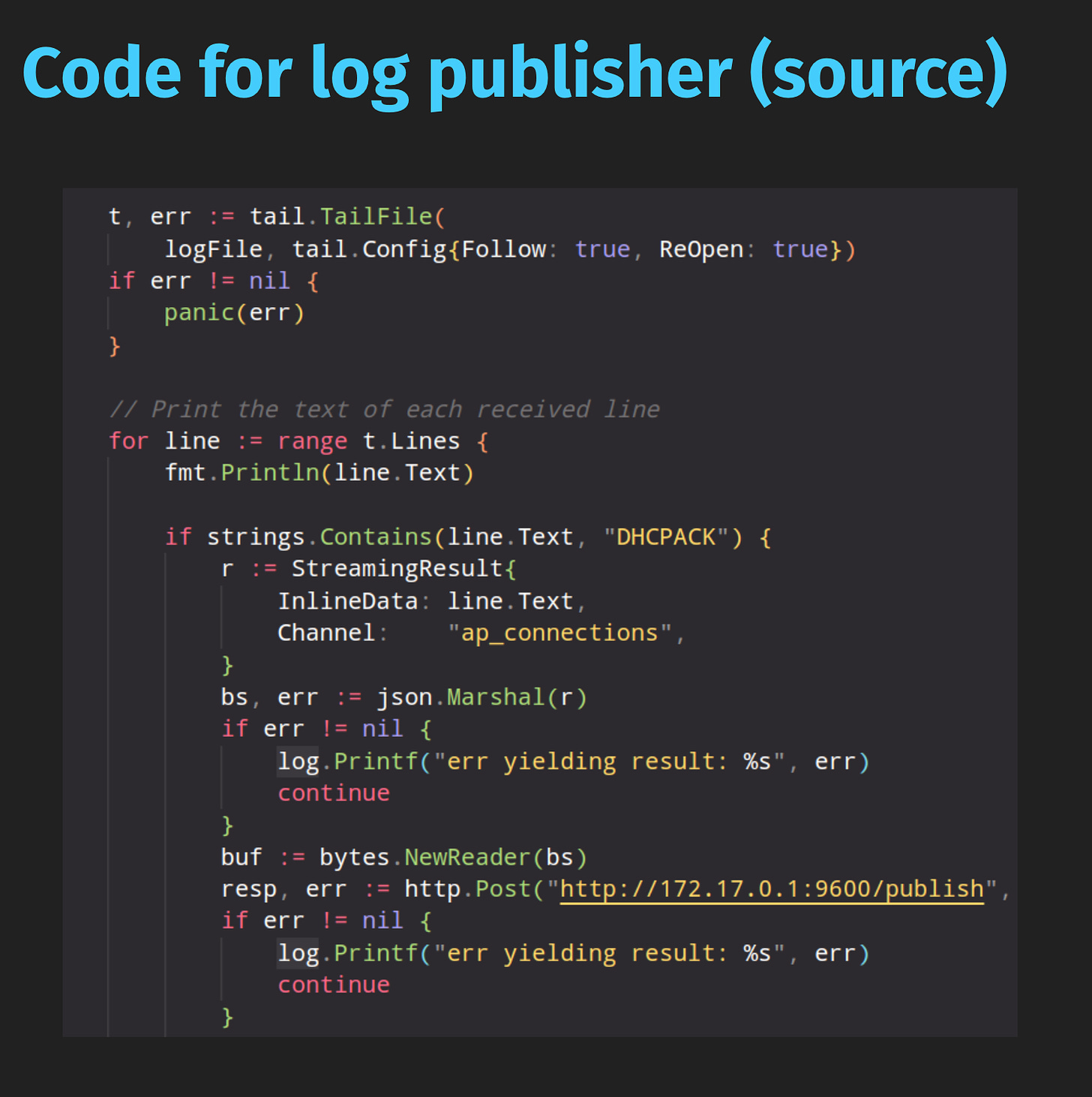

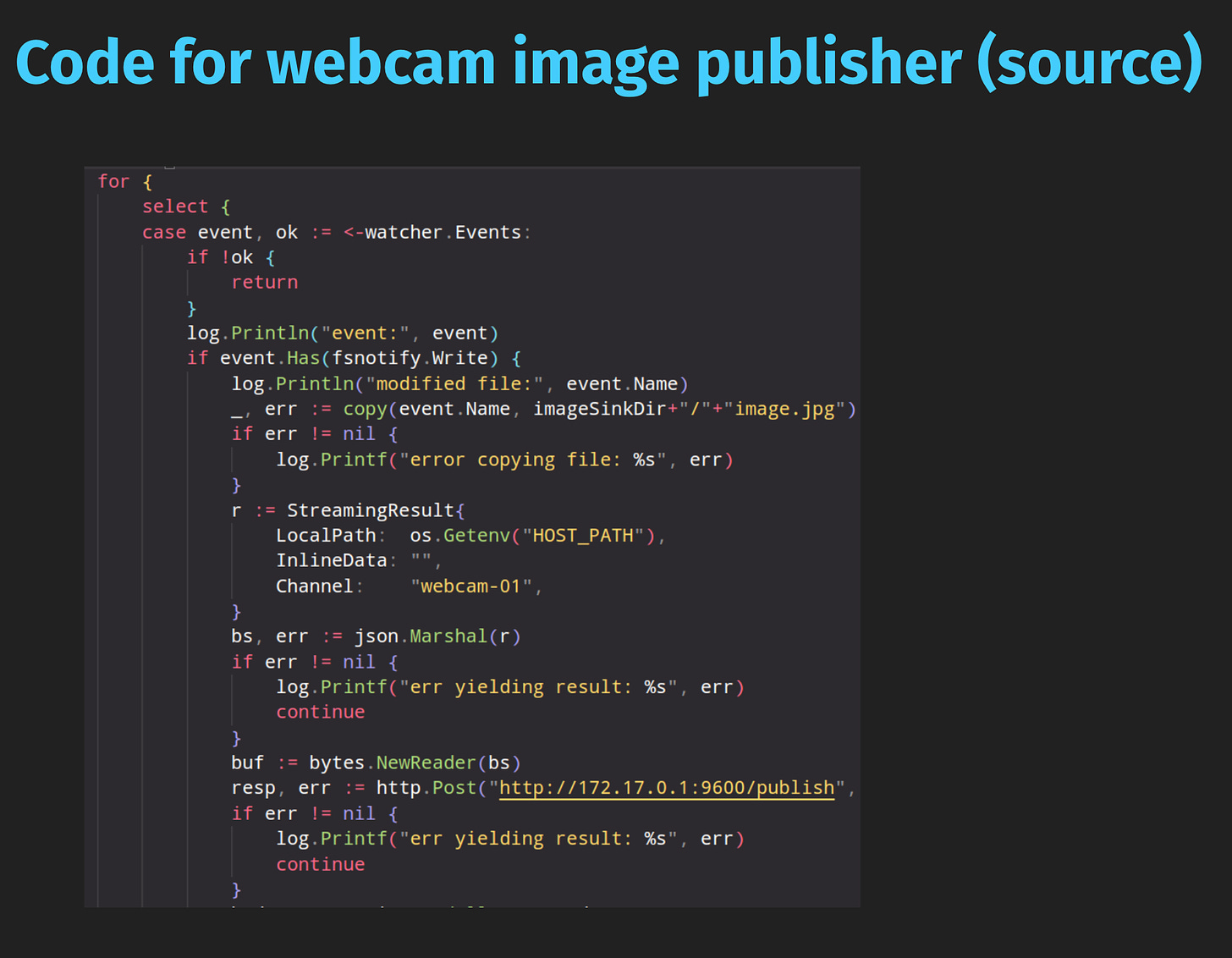

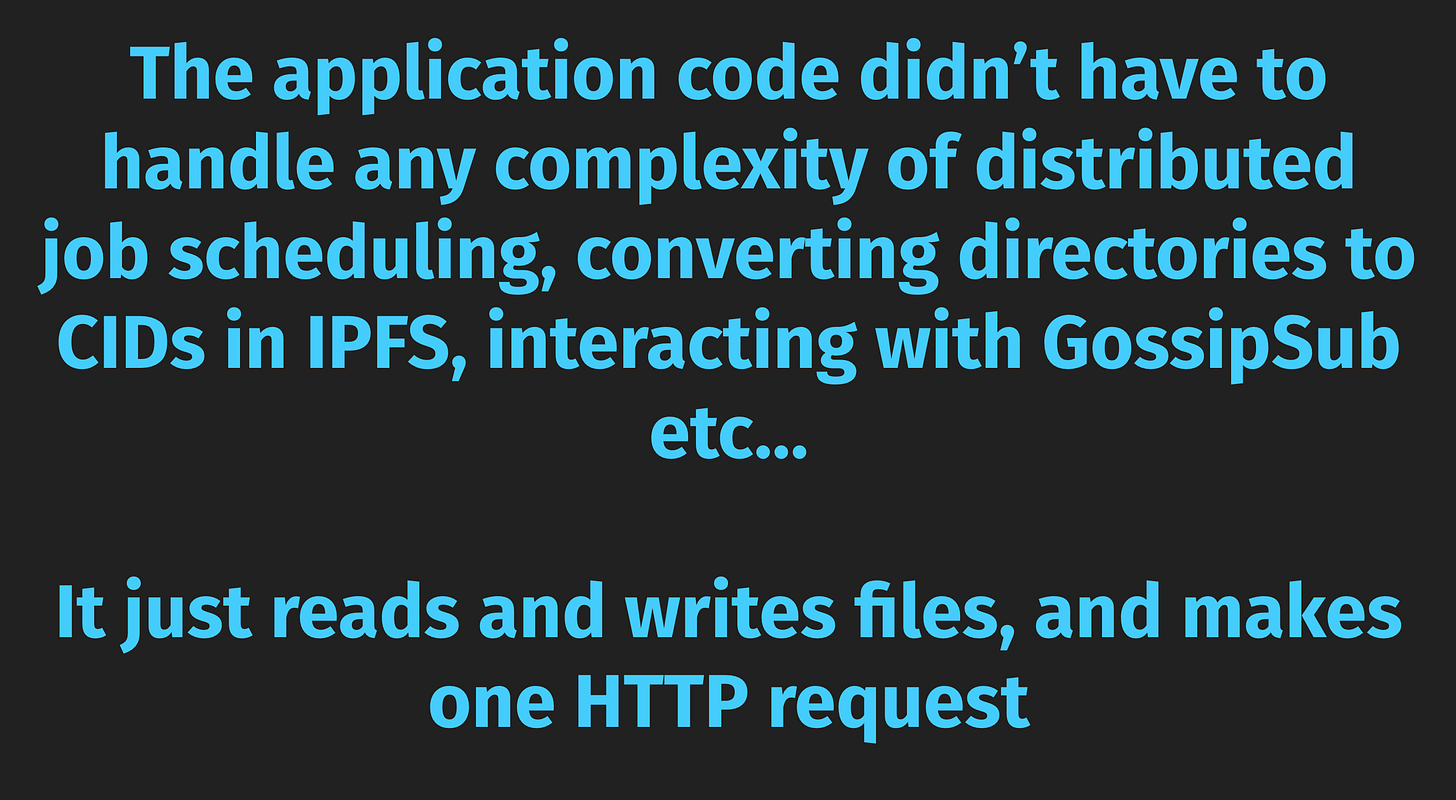

The user code itself is then simple!

All that the user code is doing is reading and writing files!
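As a rough illustration of what "just reading and writing files" can look like, here is a small Python sketch. It is not the demo's code: `process_inputs`, the `*.jpg` pattern, the `.txt` result files and the `infer` callable are all assumptions made for the example.

```python
from pathlib import Path


def process_inputs(input_dir: Path, output_dir: Path, infer) -> list:
    """Run infer(image_bytes) on each unprocessed JPEG and write the result as text.

    An image counts as processed if its result file already exists, so the
    function can be called repeatedly as new files arrive in input_dir.
    Returns the names of the result files written by this call.
    """
    output_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for image in sorted(input_dir.glob("*.jpg")):
        result_path = output_dir / (image.stem + ".txt")
        if result_path.exists():
            continue  # already handled on an earlier pass
        result_path.write_text(infer(image.read_bytes()))
        written.append(result_path.name)
    return written
```

The point of the design is that the function knows nothing about streaming or CIDs: it only sees an input directory that fills up and an output directory it writes to.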

IPFS challenges 😬

We've been experiencing issues with a canary that submits a deterministic job to Bacalhau and times out when downloading a ~60MB CID from IPFS. This has never been a stable canary, but the problem got so much worse starting Jan 23rd that we had to disable alarming on the canary. Observations:

The canary either succeeds and downloads the content within 20 seconds, or hangs and eventually times out when our 5 minute timeout window expires

The DHT showed over 2,500 providers for the same CID in the network, which suggests that previous canaries might have broadcast themselves as providers before going down

Actions:

Fixed a resource leak where the canary IPFS client stayed alive even after the test was over

Implemented our own lite IPFS node with a low-power, transient repo configuration, but this didn't help

Used IPFS peering to have sticky connections with Bacalhau IPFS nodes that have the CID

Changed the canary to submit random jobs that generate a new CID in each run, to avoid routing the request to an unavailable provider. This was tested but not merged

Reduced the canary's retry attempts, so that failures don't make things worse by leaving concurrent canaries running in parallel
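The "succeeds quickly or hangs until the timeout" pattern, combined with a small retry budget, can be sketched in Python as follows. This is a sketch under stated assumptions, not the actual canary: `run_with_deadline`, `canary` and the `fetch` callable are hypothetical names, and the 300 second default simply mirrors the 5 minute window mentioned above.

```python
import concurrent.futures
import time


def run_with_deadline(fetch, deadline_s):
    """Run fetch() in a worker thread and wait at most deadline_s seconds.

    Returns ("ok", value, elapsed) or ("timeout", None, elapsed).
    Exceptions raised by fetch() propagate to the caller.
    Note: Python cannot kill a hung thread, so on timeout the worker keeps
    running in the background; we only stop waiting for it.
    """
    executor = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    start = time.monotonic()
    future = executor.submit(fetch)
    try:
        value = future.result(timeout=deadline_s)
        return ("ok", value, time.monotonic() - start)
    except concurrent.futures.TimeoutError:
        return ("timeout", None, time.monotonic() - start)
    finally:
        executor.shutdown(wait=False)  # do not block on a hung download


def canary(fetch, deadline_s=300.0, max_attempts=1):
    """Try fetch() up to max_attempts times; stop at the first success.

    Returns a list of (status, elapsed_seconds), one entry per attempt.
    """
    outcomes = []
    for _ in range(max_attempts):
        status, _value, elapsed = run_with_deadline(fetch, deadline_s)
        outcomes.append((status, elapsed))
        if status == "ok":
            break
    return outcomes
```

Keeping `max_attempts` low bounds how long one canary run can occupy resources, which is the motivation behind reducing retries.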

Pending Actions:

Upgrade to Kubo 0.18.1. They just fixed a dependency conflict, so we are good to test this out

Deploy old Bacalhau and canary versions in staging to see if we have introduced a bug lately

We’re hoping to run this down as IPFS is a really key piece of technology in our stack!

What’s next? ⏩

Project Frog/Lilypad (FEVM integration with Bacalhau) demo

Progress on Filecoin Station integration

Start work on PoC of the brand new “Insulated jobs” – running jobs in a secure context with audited inputs/outputs

Questions/comments? Let us know!

Thanks for reading!

Your Humble Bacalhau Team